Is AI in a 'Mainframe Era' That Will Shift to a 'PC Era' Due to Economics?

Current trends in AI infrastructure economics point to significant growth ahead. Industry analysts project that the aggregate annual AI infrastructure commitment from the five largest US cloud and technology companies could rise from approximately $380 billion in 2025 to an estimated $660-690 billion in 2026. Yet beneath these projections lies a fundamental question: are we witnessing the AI equivalent of the mainframe era, and will economic realities force a shift to distributed, democratized computing?

The parallels to computing's past are notable. IBM's System/360 family, introduced in 1964, was designed to handle both commercial and scientific applications, offering a 50-fold performance range across six processor models. Today's AI data centers fill a comparable functional role, scaled up by orders of magnitude.

The Mainframe Mirror: Centralized AI Infrastructure

Today's AI infrastructure resembles the mainframe era of the 1960s and 1970s. The scale would be incomprehensible by 1968 standards, but the organizational logic remains the same: centralize computational resources, run them at maximum utilization, and pipe in problems that exceed human processing capacity.

The economics are similar too. For consistent, high-volume workloads, some organizations are reaching a tipping point at which on-premises deployment may be cheaper than cloud services. That point tends to arrive when cloud costs exceed roughly 60% to 70% of the total cost of acquiring equivalent on-premises systems, at which stage capital investment becomes more attractive than operating expense for predictable AI workloads.
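The 60% to 70% band can be turned into a rough screening check. The sketch below is illustrative only: the function name, the three labels, and the dollar figures are hypothetical, and it assumes cloud spend and on-premises acquisition cost are measured over the same planning period, which the estimate itself does not specify.

```python
def cloud_vs_onprem_signal(cloud_cost_usd, onprem_acquisition_usd,
                           low=0.60, high=0.70):
    """Compare cloud spend with the cost of equivalent on-prem systems.

    Returns (ratio, label). The labels are illustrative, not an
    industry standard.
    """
    if onprem_acquisition_usd <= 0:
        raise ValueError("onprem_acquisition_usd must be positive")
    ratio = cloud_cost_usd / onprem_acquisition_usd
    if ratio < low:
        label = "stay on cloud"
    elif ratio <= high:
        label = "approaching tipping point"
    else:
        label = "evaluate on-premises"
    return ratio, label


# Hypothetical figures for three workloads against a $600k on-prem quote.
for cloud_spend in (300_000, 390_000, 450_000):
    ratio, label = cloud_vs_onprem_signal(cloud_spend, 600_000)
    print(f"cloud ${cloud_spend:,}: ratio {ratio:.2f} -> {label}")
```

A check like this only flags workloads worth a closer look; a real decision would also weigh staffing, power, and hardware refresh cycles.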

Just as mainframes were accessible only to large institutions with substantial capital, today's AI capabilities remain concentrated among hyperscalers. In the public markets, hyperscalers are eating into their cash hoards and seeking alternative forms of financing to fund their AI capital expenditure (CAPEX) plans, at a scale that reflects the $660-690 billion projected for 2026 across the five largest US cloud and technology companies.

Unsustainable Unit Economics Drive Change

The economic pressures building today mirror those that eventually broke the mainframe monopoly. Training gets the headlines, yet inference, the continuous and recurring cost of serving a model in production, can crush budgets at potentially 15-20x the training expense. Industry analyses suggest that for major language models, cumulative inference costs could far exceed the initial training investment over the model's lifetime.

The pressure shows up first in utilization. Enterprises locked in GPU capacity during the AI scramble; now utilization sits at 5% and the bill is due. When metered billing is applied to an infrastructure stack that sits idle 95% of the time, the cost per useful token becomes a line-item emergency the moment a project moves into production.
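The effect of idle capacity on unit cost is simple arithmetic. The sketch below assumes reserved capacity billed at a fixed hourly rate regardless of load; the function name, the $4/hour price, and the 1,000 tokens/second throughput are hypothetical figures chosen only for illustration.

```python
def cost_per_million_useful_tokens(hourly_cost_usd, peak_tokens_per_second,
                                   utilization):
    """Effective cost per one million tokens actually served.

    Assumes the hourly cost is paid whether or not the hardware is busy.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    useful_tokens_per_hour = peak_tokens_per_second * 3600 * utilization
    return hourly_cost_usd / useful_tokens_per_hour * 1_000_000


# Hypothetical accelerator: $4/hour, able to serve 1,000 tokens/second.
fully_used = cost_per_million_useful_tokens(4.0, 1000, 1.0)
mostly_idle = cost_per_million_useful_tokens(4.0, 1000, 0.05)

print(f"100% utilization: ${fully_used:.2f} per million tokens")
print(f"  5% utilization: ${mostly_idle:.2f} per million tokens")
print(f"multiplier: {mostly_idle / fully_used:.0f}x")
```

Whatever the absolute prices, the multiplier is just the inverse of utilization: at 5% utilization each useful token costs 20 times what it would on fully used hardware.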

Industry estimates suggest potential savings for organizations willing to invest in dedicated infrastructure. For sustained, high-utilization workloads, on-premises may deliver substantially lower cost per million tokens compared to cloud IaaS and commercial GenAI APIs. The on-premises breakeven point against cloud is estimated at less than 4 months for high-utilization scenarios, a dramatic shift from the 12-18 month cycles of the previous generation.
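A breakeven estimate of this kind reduces to dividing the upfront hardware cost by the monthly saving. The sketch below is a simplified model with hypothetical inputs: it ignores financing costs, depreciation, and hardware refresh, and the dollar figures are invented to show how a sub-4-month result can arise when cloud spend is high relative to on-premises operating cost.

```python
def breakeven_months(onprem_capex_usd, onprem_monthly_opex_usd,
                     cloud_monthly_usd):
    """Months until cumulative cloud spend matches on-prem capex plus opex.

    Returns None when cloud is not more expensive per month, because
    the on-prem investment never pays back in that case.
    """
    monthly_saving = cloud_monthly_usd - onprem_monthly_opex_usd
    if monthly_saving <= 0:
        return None
    return onprem_capex_usd / monthly_saving


# Hypothetical high-utilization workload.
months = breakeven_months(
    onprem_capex_usd=300_000,        # hardware purchase
    onprem_monthly_opex_usd=10_000,  # power, space, staff share
    cloud_monthly_usd=90_000,        # equivalent cloud bill
)
print(f"breakeven after {months:.2f} months")
```

The result is highly sensitive to utilization: if the same hardware sits mostly idle, the equivalent cloud bill shrinks and the breakeven point moves out or disappears.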

The Edge Revolution: Specialized Chips Enable Distributed AI

While centralized AI infrastructure faces economic challenges, edge computing and specialized chips are laying the groundwork for AI's potential "PC moment." Neural Processing Units (NPUs) and specialized AI accelerators are now standard in everything from smartphones to industrial equipment. Designed specifically for AI workloads, some of these chips deliver up to 10 trillion operations per second (TOPS) while drawing just 2.5 watts of power. That is at least six times more efficient than traditional CPUs and mainstream GPUs for neural network tasks.
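The efficiency claim can be restated as operations per watt. The short calculation below uses only the two figures quoted above; the baseline it derives is what the "six times" comparison implies, not a measured number for any particular CPU or GPU.

```python
def tops_per_watt(tops, watts):
    """Energy efficiency in trillions of operations per second per watt."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tops / watts


# Figures quoted in the text: 10 TOPS at 2.5 W.
npu_efficiency = tops_per_watt(10.0, 2.5)

# "At least six times more efficient" implies the comparison hardware
# delivers no more than one sixth of that figure.
implied_baseline_ceiling = npu_efficiency / 6.0

print(f"NPU: {npu_efficiency:.1f} TOPS/W")
print(f"implied CPU/GPU ceiling: {implied_baseline_ceiling:.2f} TOPS/W")
```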

The technology has reached an inflection point: the chips are finally inside everything. Dedicated NPUs, silicon designed specifically for AI math, are now standard components in consumer smartphones, laptops, and a growing share of industrial hardware. A couple of years ago, an NPU was a premium feature; now it's just part of the spec. The result is an enormous installed base of hardware that can run capable on-device AI without heroic engineering effort.

The models shrank, but not in a way that broke them. Compression techniques (quantization, pruning, knowledge distillation) have improved markedly, and small language models in the 1-7 billion parameter range now handle real tasks competently on ordinary consumer devices.
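A back-of-the-envelope calculation shows why quantization matters for on-device use. The sketch below estimates memory for model weights only, as parameter count times bits per weight; it deliberately ignores activation memory, the KV cache, and the small overhead quantization formats add, so real footprints will be somewhat larger.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for model weights alone, in GB (10**9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Sizes from the 1-7 billion parameter range, at common precisions.
print(f"{'params':>8} {'16-bit':>8} {'8-bit':>8} {'4-bit':>8}")
for params in (1, 3, 7):
    row = [weight_memory_gb(params, bits) for bits in (16, 8, 4)]
    print(f"{params:>7}B {row[0]:>7.1f}G {row[1]:>7.1f}G {row[2]:>7.1f}G")
```

Under these assumptions a 7-billion-parameter model drops from 14 GB of weights at 16-bit precision to 3.5 GB at 4-bit, which is the difference between needing a workstation GPU and fitting in the memory of a typical laptop or high-end phone.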

Market Forces Driving Democratization

The same market forces that drove the mainframe-to-PC transition are building momentum in AI. Like the shift from mainframes to personal computing, and later the rise of cloud computing with its scalable, on-demand infrastructure, the decentralization of AI democratizes access to computational power: advanced AI capabilities move from the exclusive domain of hyperscale data centers to billions of everyday devices.

Cost pressures are accelerating this democratization. SemiAnalysis estimates that Google could cut its cost per computation relative to Nvidia by 62% with its own TPU for internal workloads. This mirrors how PC manufacturers eventually offered better price-performance than mainframes for many workloads.

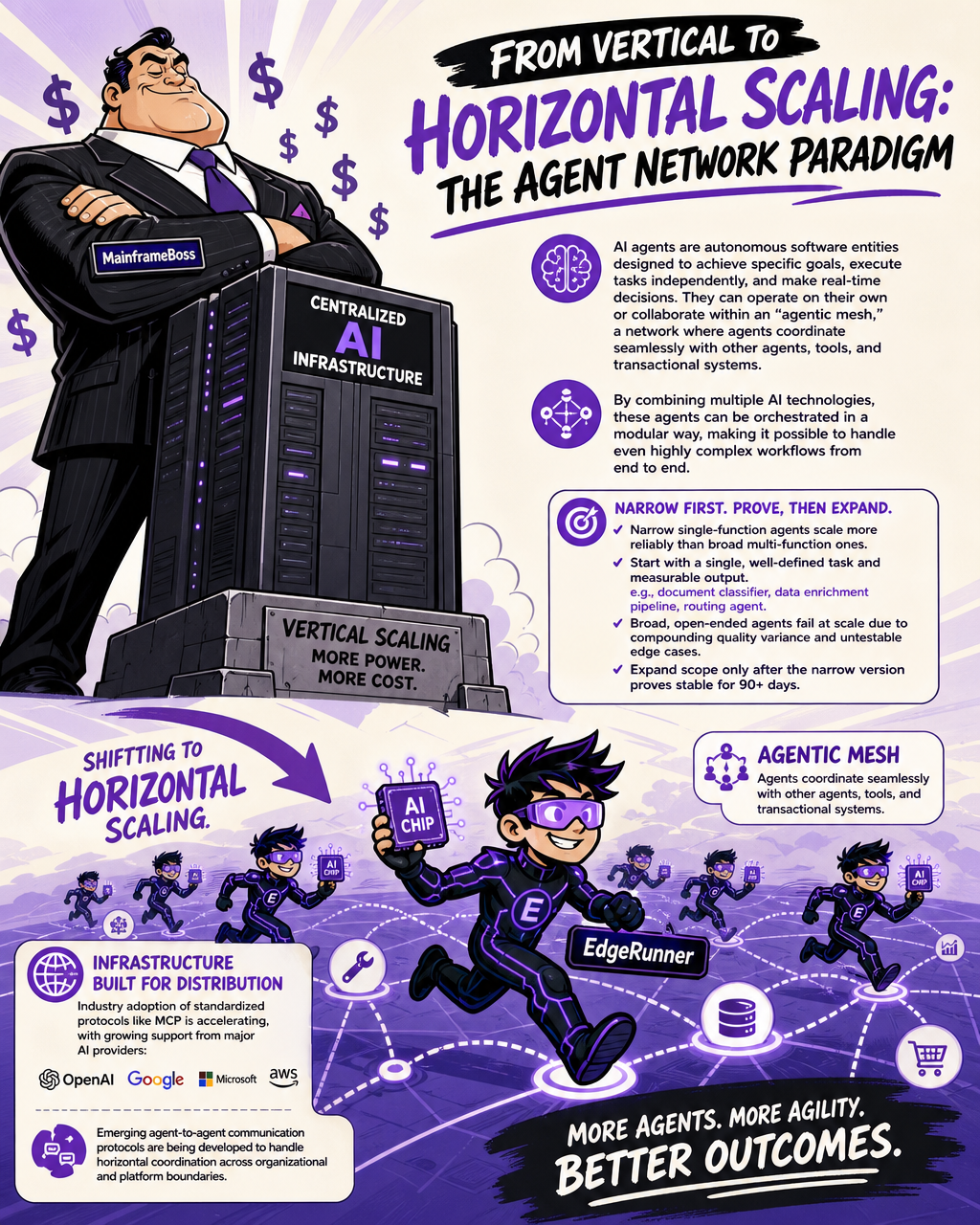

From Vertical to Horizontal Scaling: The Agent Network Paradigm

Perhaps the most compelling parallel to the mainframe-PC transition lies in the shift from vertical to horizontal scaling. AI agents are autonomous software entities designed to achieve specific goals, execute tasks independently, and make real-time decisions. They can operate on their own or collaborate within an "agentic mesh," a network where agents coordinate seamlessly with other agents, tools, and transactional systems. By combining multiple AI technologies, these agents can be orchestrated in a modular way, making it possible to handle even highly complex workflows from end to end.

This represents a fundamental architectural shift. Narrow single-function agents scale more reliably than broad multi-function ones. In the deployments that succeeded, teams started with agents scoped to a single, well-defined task with measurable outputs, such as a document classifier, a data enrichment pipeline, or a routing agent. Agents designed to handle broad, open-ended tasks failed at scale because of compounding quality variance and untestable edge cases. Scope expanded only after the narrow version had proved stable for 90+ days.
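The narrow-agent pattern can be sketched as a chain of single-purpose steps, each with one testable output. The example below is a minimal illustration, not an implementation of any agent framework or protocol: the `Agent` class, the three functions, and the sample document are hypothetical, and simple rules stand in for what would be model calls in a real system.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Document = Dict[str, str]


@dataclass
class Agent:
    """A single-function agent: one name, one narrowly scoped task."""
    name: str
    run: Callable[[Document], Document]


def classify(doc: Document) -> Document:
    # Stand-in for a model call: label the document by keyword.
    label = "invoice" if "invoice" in doc["text"].lower() else "other"
    return {**doc, "label": label}


def enrich(doc: Document) -> Document:
    # Add a derived field without touching the existing ones.
    return {**doc, "word_count": str(len(doc["text"].split()))}


def route(doc: Document) -> Document:
    # Pick a destination queue based only on the classifier's label.
    queue = "finance" if doc["label"] == "invoice" else "general"
    return {**doc, "queue": queue}


def run_pipeline(agents: List[Agent], doc: Document) -> Document:
    """Pass the document through each agent in order."""
    for agent in agents:
        doc = agent.run(doc)
    return doc


PIPELINE = [
    Agent("classifier", classify),
    Agent("enricher", enrich),
    Agent("router", route),
]

result = run_pipeline(PIPELINE, {"text": "Invoice 1042 for cloud GPU usage"})
print(result)
```

Because each step has a single measurable output, it can be evaluated and monitored in isolation, which is the property that makes narrow agents easier to stabilize before their scope is widened.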

The infrastructure is evolving to support this distributed approach. Adoption of standardized protocols such as the Model Context Protocol (MCP) is accelerating, with growing support from major AI providers including OpenAI, Google, Microsoft, and Amazon. Agent-to-agent communication protocols are also emerging to handle horizontal coordination across organizational and platform boundaries.

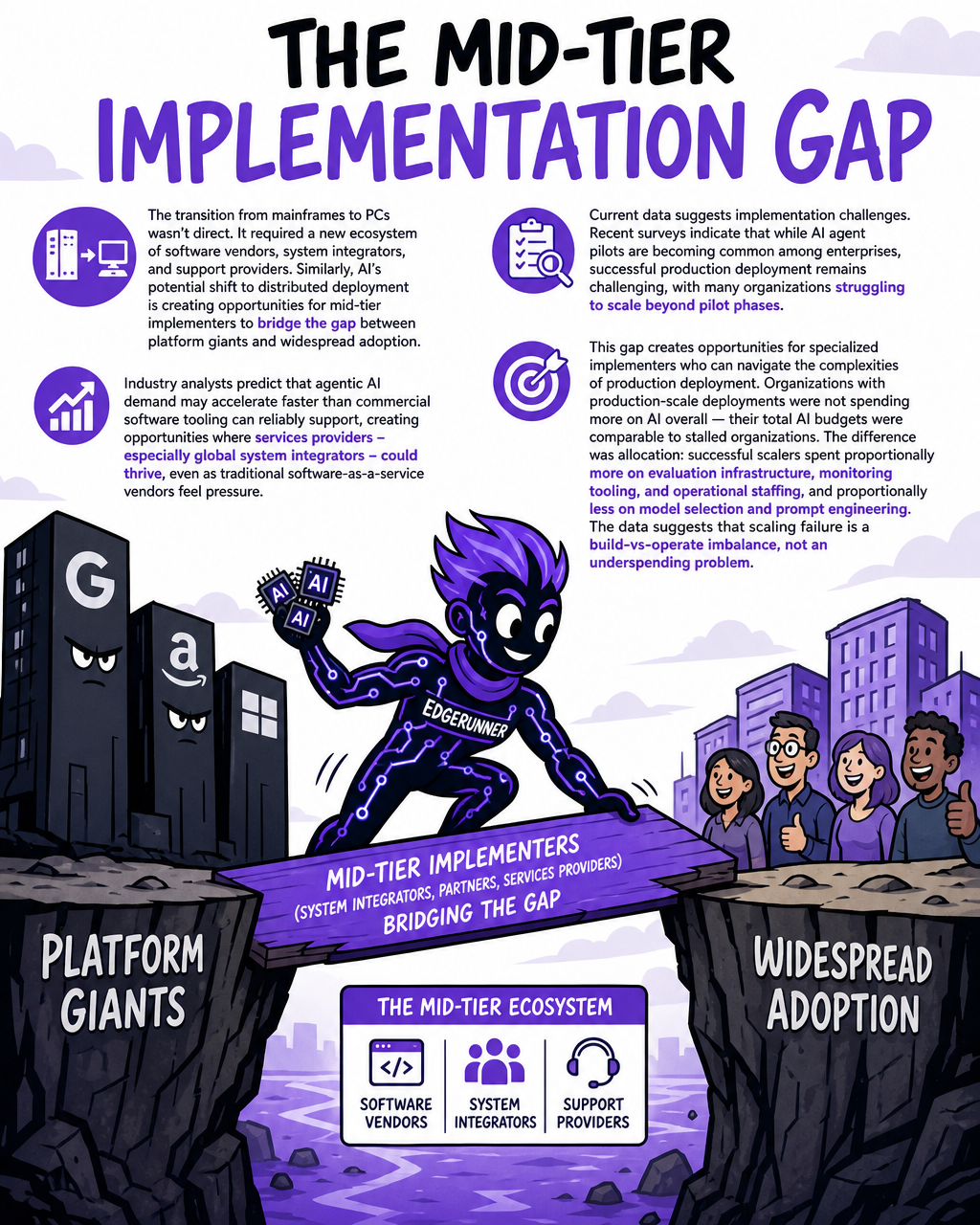

The Mid-Tier Implementation Gap

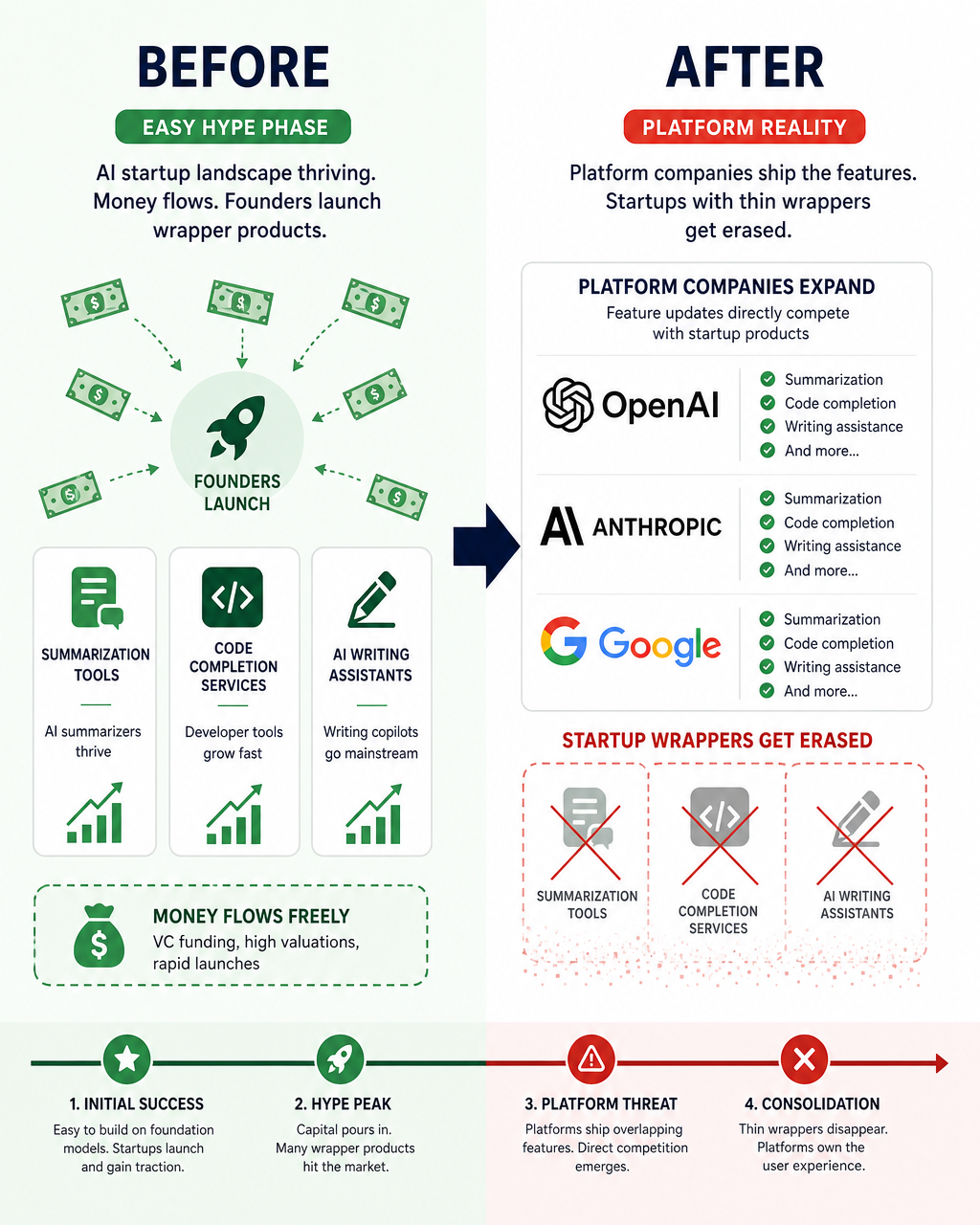

The transition from mainframes to PCs wasn't direct. It required a new ecosystem of software vendors, system integrators, and support providers. Similarly, AI's potential shift to distributed deployment is creating opportunities for mid-tier implementers to bridge the gap between platform giants and widespread adoption.

Industry analysts predict that agentic AI demand may accelerate faster than commercial software tooling can reliably support, creating opportunities where services providers – especially global system integrators – could thrive, even as traditional software-as-a-service vendors feel pressure.

Survey data points to an implementation gap: AI agent pilots are becoming common among enterprises, but many organizations struggle to scale beyond the pilot phase into successful production deployment.

This gap creates opportunities for specialized implementers who can navigate the complexities of production deployment. Organizations with production-scale deployments were not spending more on AI overall; their total AI budgets were comparable to those of stalled organizations. The difference was allocation: successful scalers spent proportionally more on evaluation infrastructure, monitoring tooling, and operational staffing, and proportionally less on model selection and prompt engineering. The data suggests that scaling failure is a build-vs-operate imbalance, not an underspending problem.

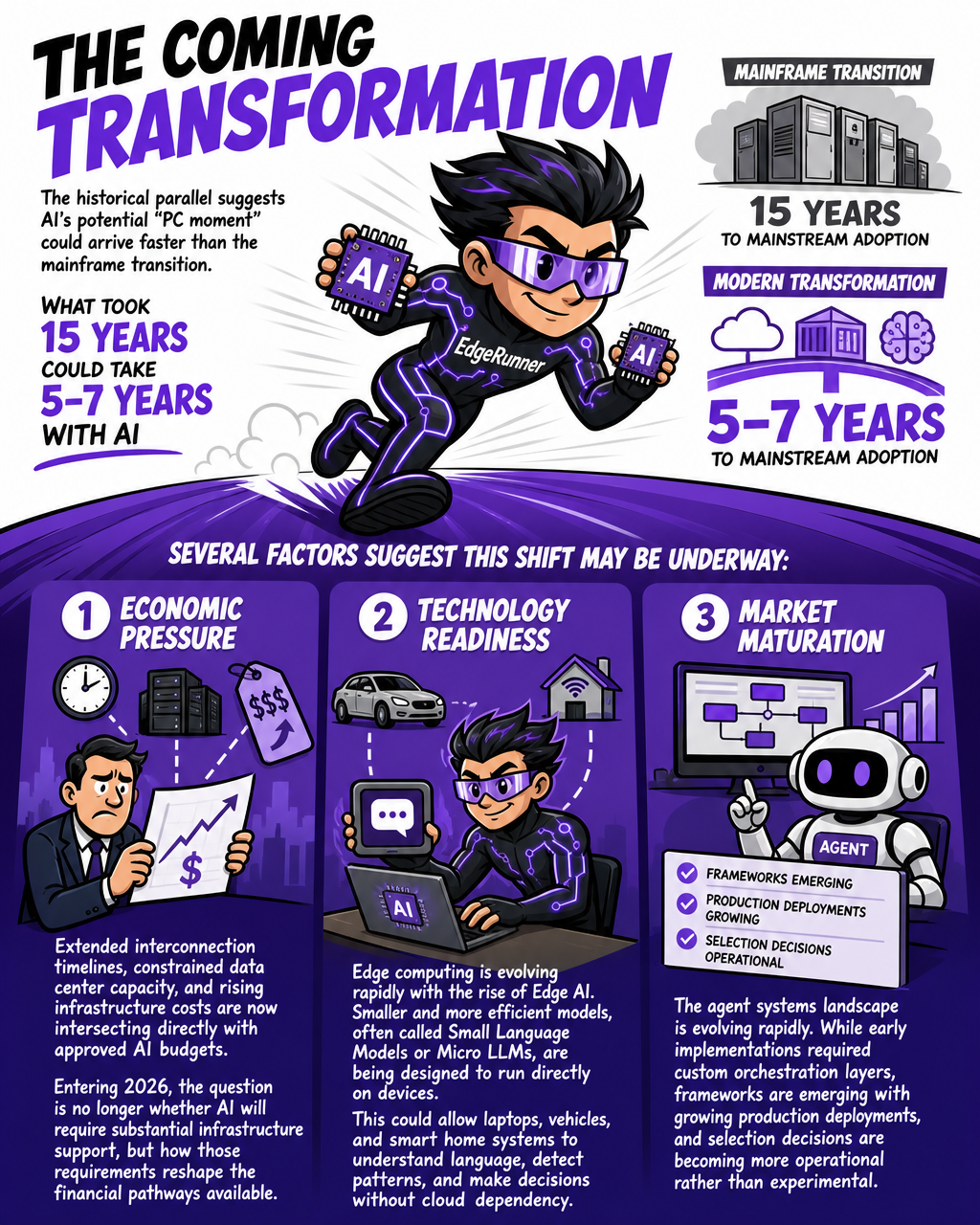

The Coming Transformation

The historical parallel suggests AI's potential "PC moment" could arrive faster than the mainframe transition did. That transition took roughly 15 years from introduction to mainstream adoption; with cloud infrastructure, containerization, and AI capabilities in place, modern transformations may compress the cycle to 5–7 years while delivering even greater benefits.

Several factors suggest this shift may be underway:

- Economic pressure: Extended interconnection timelines, constrained data center capacity, and rising infrastructure costs are now intersecting directly with approved AI budgets. Entering 2026, the question is no longer whether AI will require substantial infrastructure support, but how those requirements reshape the financial pathways available.
- Technology readiness: Edge computing is evolving rapidly with the rise of Edge AI. Smaller and more efficient models, often called Small Language Models or Micro LLMs, are being designed to run directly on devices. This could allow laptops, vehicles, and smart home systems to understand language, detect patterns, and make decisions without cloud dependency.
- Market maturation: The agent systems landscape is evolving rapidly. While early implementations required custom orchestration layers, frameworks are emerging with growing production deployments, and selection decisions are becoming more operational rather than experimental.

Conclusion: The Economics of Possibility

The parallels between today's AI infrastructure and the mainframe era aren't merely historical curiosities. They reveal economic and technological patterns that could shape AI's future development. However, this analogy has limitations—AI workloads may require different infrastructure approaches than traditional computing, and centralized systems offer advantages in coordination, security, and specialized hardware that distributed systems struggle to match.

While the mainframe-to-PC transition provides a useful framework for understanding potential developments in AI infrastructure, the outcome isn't predetermined. Economic pressures, technological advances, and market dynamics will ultimately determine whether AI follows a similar path toward democratization and distribution.

Supramono

Your AI venture engine — discover, build, sell
